Introduction

Recent Internet trends (cloudification, app-based consumption and interactivity), combined with our rapidly growing dependence on Internet-based resources for everyday actions, establish a new set of requirements for the Internet infrastructure, the network:

- High bandwidth

- Low round-trip delay between data producer and consumer

These two requirements force the network topology to become denser and more diverse.

Data producers and consumers are predominantly in two different Autonomous Systems (AS), which may (but do not have to) be directly adjacent. For example, a content provider's DC belongs to one AS that is connected to an ISP backbone AS, which in turn is connected to the AnA AS to which the consumer device belongs.

Figure 1

The connection between ASes is built at multiple (geographically diverse) sites, which serves resiliency requirements and the producer-consumer latency (experience quality) requirement, and mitigates the cost of transmitting large amounts of data over long distances. However, due to traffic growth, the amount of data exchanged at a single site becomes so large that using geo-redundancy to cover any failure becomes economically non-viable. Similarly, a 1+1 redundancy scheme at every site becomes too expensive.

Scale-Out peering

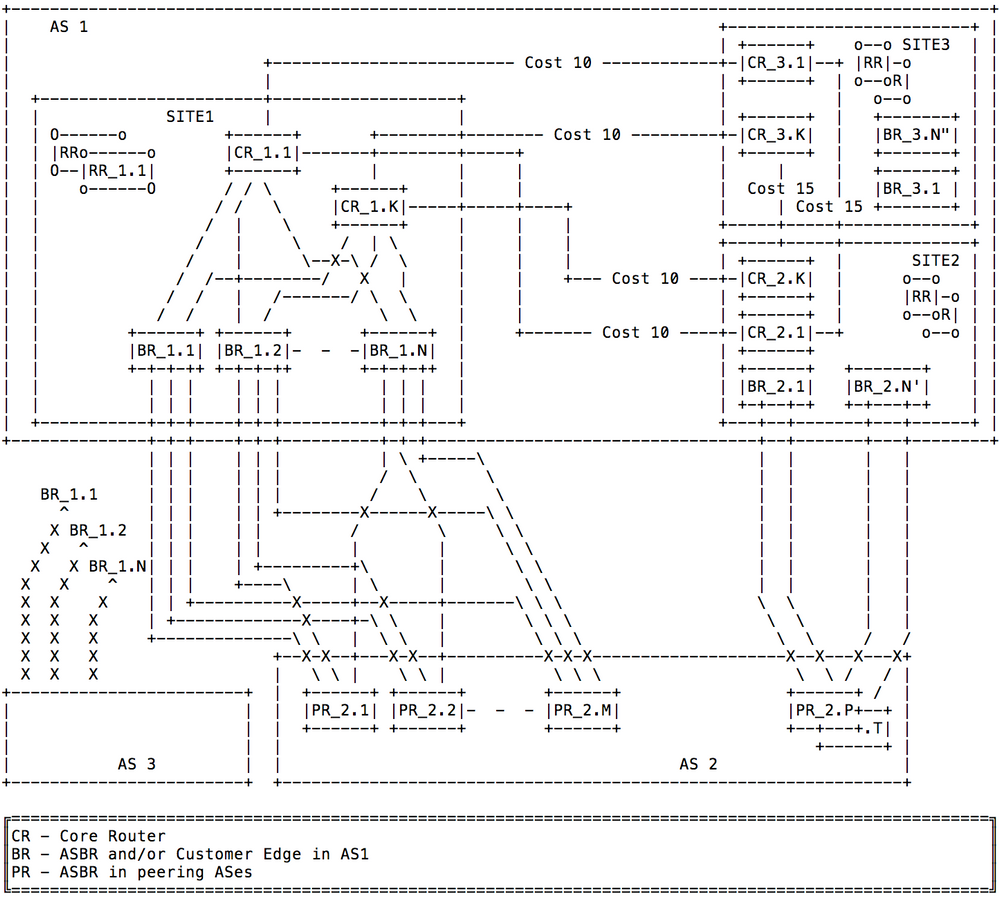

Therefore, some of the biggest providers are exploring the concept of a "scale-out" architecture at peering sites. In this architecture, multiple independent ASBRs are deployed in each of the connected ASes. This addresses on-site redundancy via an N+1 model, and brings other benefits such as reduced day-zero capex, flexible capacity rollout and node failure risk diversification. These ASBRs are often connected in a Leaf-Spine topology with Core Routers (CR) and augmented with a per-site BGP Route Reflector (RR), or a pair of them. See Figure 2 below for an example (site 1).

The fundamental requirements in this architecture are:

- Keep traffic on paths that have a low round-trip time (RTT).

- Utilize all peering links that offer the same low RTT.

- Minimize the time needed to restore connectivity with all ECMP paths in use and on low-RTT paths.

Low RTT problem

The Internet inter-AS protocol, BGP, does not provide a mechanism to directly carry delay information. The general consensus in the industry is that lower BGP metrics (shorter AS-path, lower MED, etc.), augmented by the lowest IGP cost, provide some sense of delay visibility. This is a bold assumption, and only generally applicable (provided there is no mutual agreement between peering ASes). At this point, we simply assume that a BGP path with a lower metric/cost provides a lower RTT, and that paths that are equal in terms of metric/cost (AS-path length, local-preference, MED, IGP cost to the BGP protocol next hop (PNH), etc.) provide the same RTT.

All equal cost path utilization

In order to use all links between peering ASes that provide the same BGP path cost to a destination prefix, BGP speakers need, at minimum, to be enabled for multipath operation. Additionally, all (AS ingress) BGP speakers need to know at least all equal-cost best paths to the destination via multiple ASBRs. This comes naturally when all BGP speakers are fully meshed by iBGP sessions. However, such an iBGP full mesh is rather impractical in a scale-out architecture due to the high total number of ASBRs.

The well-known techniques for dealing with the full-mesh scale challenge, Route Reflection and Confederations, truncate path information because (by default) they advertise to clients, or between sub-ASes, only ONE best (and active) BGP path. While this helps keep path and session scale manageable, it makes BGP multipath unusable. BGP ADD-PATH between an RR and its clients (or among sub-ASes) restores the path visibility that BGP multipath needs.
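The effect of ADD-PATH on what a client can see can be illustrated with a minimal Python sketch. It is a toy model, not a BGP implementation: the `Path` class, the `reflect` function and the single `cost` field (standing in for the whole BGP decision process) are assumptions made for illustration, and ADD-PATH is modelled here as "send every equal-cost best path".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    prefix: str
    next_hop: str  # BGP protocol next hop (PNH)
    cost: int      # hypothetical stand-in for the full BGP decision process; lower wins


def reflect(paths, add_path=False):
    """Return the paths a route reflector advertises to one client."""
    by_prefix = {}
    for path in paths:
        by_prefix.setdefault(path.prefix, []).append(path)

    advertised = []
    for candidates in by_prefix.values():
        best_cost = min(p.cost for p in candidates)
        equal_best = [p for p in candidates if p.cost == best_cost]
        # Default behaviour: one best path per prefix.
        # With ADD-PATH (as modelled here): every equal-cost best path.
        advertised.extend(equal_best if add_path else equal_best[:1])
    return advertised


# Four ASBRs at one site advertise the same prefix at equal cost.
learned = [Path("203.0.113.0/24", f"BR1.{i}", 10) for i in range(1, 5)]

print(len(reflect(learned)))                 # 1: multipath has nothing to balance over
print(len(reflect(learned, add_path=True)))  # 4: all equal-cost exits are visible
```

With a single advertised path the client cannot build an ECMP set, however multipath is configured; with ADD-PATH it sees one path per ASBR.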

Scale-out architecture summary

In summary, for scale-out peering architecture:

- BGP multipath needs to be enabled on all iBGP sessions inside the AS.

- BGP multipath needs to be enabled on all eBGP sessions of each ASBR.

- BGP ADD-PATH needs to be enabled on all iBGP sessions.

- The RR needs to be able to send multiple paths per prefix. The upper limit depends on:

  - The maximum number of ASBRs per site (say N).

  - Possibly also the maximum number of eBGP sessions held by a single ASBR with a single peer AS (say M), depending on the BGP PNH configuration.

- The RRC/ASBR may need to be able to send multiple paths per prefix if the BGP PNH configuration is "NH unchanged". The upper limit depends on the maximum number of eBGP sessions held by a single ASBR with a single peer AS (say M).

The following network diagram is used as the reference for the rest of this document:

Figure 2

IBGP with Next-Hop unchanged

One of the standard BGP configurations is the one in which an ASBR, when advertising an externally learned prefix into iBGP, does not modify the BGP PNH. The BGP PNH is therefore set to the IP address of the interface on the external peering router. The strength of this technique is the shorter time needed to restore connectivity with all ECMP paths in use and on low-RTT paths. The drawback is an extremely high BGP RIB scale, proportional to the number of inter-AS links.

EXAMPLE:

Let us assume that in the network in Figure 2, all PR2.x routers of AS2 advertise the same set of prefixes on all sessions to AS1.

If BR1.1-BR1.N and BR2.1-BR2.N' advertise only one path per prefix to their RRs then, as a result of ADD-PATH among the RRs, BRs and CRs, the BRs and CRs at site 3 learn N+N' paths per prefix learned from AS2. This is sufficient to distribute load equally among all N ASBRs at site 1 (note the IGP cost between site 2 and site 3).

However, when the interfaces over which all of BR1.1-BR1.N learned their best and active path (say, the interfaces to PR2.1 in all cases, as the result of a node failure) become unavailable, the route to the BGP PNH (the IP address of the PR2.1 interface) is removed from the IGP. BGP speakers at other sites (BR3.x) react by temporarily directing traffic to site 2 (BR2.1-BR2.N'). This switchover may happen in sub-second time, in a prefix-scale-independent manner, thanks to techniques commonly known as BGP PIC Edge. As a result, traffic is not on the lowest-cost path, even though the connection from site 1 to AS2 is not entirely broken (the links to PR2.2-PR2.M are operational).

Then all BR1.x will send an update to the RR with a new best path (say, from PR2.2) for each prefix (100,000 of them), triggering global convergence, which may take tens of minutes and is hard to predict.

In the above example, the BRs and RRs (and possibly the CRs) keep N+N' paths per prefix (N from site 1 and N' from site 2). With N=N'=4, this makes 8 paths per prefix.

The solution to sub-optimal routing right after a failure would be to enable the BRs to advertise multiple paths to their RRs, which then propagate them to all other RRs and on to the BRs. Each BR1.x at site 1 then advertises M paths (from PR2.1-PR2.M), and RR1.x holds N*M ECMP best paths and advertises them to other sites (site 3). As a result, BGP speakers at other sites (BR3.x at site 3) are provided with N*M paths per prefix from site 1 and N'*M' from site 2. Therefore, to achieve optimal routing immediately after a failure, a considerably higher scale of BGP paths needs to be handled. If M=N=N'=M'=4, then for each prefix we have 16 best paths and 16 non-best. If AS2 advertises 100k prefixes, this becomes 3.2M paths.

Although this solution provides a means of fast, prefix-scale-independent traffic switchover, it does so only if the ASBR external interface goes down, which triggers an IGP event. If an eBGP session fails but the underlying interface remains up (misconfiguration, software defect, etc.), recovery still requires per-prefix withdrawal/update, which could take tens of minutes at high scale.
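The path counts in the example above can be reproduced with a few lines of Python. The function names are hypothetical helpers chosen for this sketch; the values of N, N', M, M' and the prefix count are the ones used in the example.

```python
def paths_per_prefix(asbrs_per_site, paths_per_asbr=1):
    """Paths one site contributes per prefix: ASBRs x paths each ASBR advertises."""
    return asbrs_per_site * paths_per_asbr


def total_paths(site1_paths, site2_paths, prefixes):
    """Total paths a remote BGP speaker (e.g. BR3.x) holds for all prefixes."""
    return (site1_paths + site2_paths) * prefixes


N = N_PRIME = 4      # ASBRs at site 1 and at site 2
M = M_PRIME = 4      # eBGP sessions per ASBR towards the peer AS
PREFIXES = 100_000   # prefixes advertised by AS2

# Each ASBR advertises only its single best path to the RR.
single = total_paths(paths_per_prefix(N), paths_per_prefix(N_PRIME), PREFIXES)

# Each ASBR advertises all M of its eBGP-learned paths to the RR.
multi = total_paths(paths_per_prefix(N, M), paths_per_prefix(N_PRIME, M_PRIME), PREFIXES)

print(single)  # 800000
print(multi)   # 3200000
```

Advertising every eBGP path multiplies the path count by M, which is the price of fast recovery in the Next-Hop unchanged design.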

IBGP with Next-Hop-Self

The other common technique is to modify the BGP PNH to "self" (the BR's own IP address) when a BR advertises a path to the RR. This technique reduces the number of paths per prefix while keeping optimal forwarding (least cost and ECMP) in the failure case discussed above (e.g. PR2.1 node failure). In fact, because the IP addresses of the BGP PNHs as seen by other BGP speakers do not change in reaction to the failure event, and remain resolvable by the IGP, there is no need to reprogram the FIB at all. Unfortunately, another failure, the loss of all connectivity between a single BR (say BR1.1) and the peer AS (all PRs in AS2), would not be handled quickly. As the BGP PNH advertised by BR1.1 is not changed and is reachable via the IGP (loopback), all BGP speakers in AS1 (BRs, CRs) keep BR1.1 as one of the exit points until they receive BGP withdrawals on a prefix-by-prefix basis. This is a global convergence process that at high scale can take minutes, during which packets may be discarded or looped.

The BGP Abstract Next-Hop

The Abstract Next-Hop concept presented below does not require any changes to the BGP protocol itself. It is an architectural approach to network configuration that uses existing protocol capabilities to achieve higher scale and faster routing convergence in networks with scale-out peering sites.

When a BGP speaker advertises a path to its iBGP peer, it sets the Protocol Next-Hop attribute to the value of the Abstract-NH.

The Abstract-NH (ANH) is just an IP address that identifies a BGP session or a "set of BGP sessions".

The "set of BGP sessions" is defined by the operator in local configuration, according to network design needs, e.g.:

- "set of BGP sessions with the same peer AS and handled by a given single ASBR", or

- "set of BGP sessions with the same peer AS and handled by one or more ASBRs at a given site", or

- "set of BGP sessions with any upstream provider AS", or

- "set of BGP sessions with a given peer device and handled by one or more ASBRs of the local AS"

A route to ANH/32 is installed in the relevant RIB and redistributed into the IGP/LDP (transport protocols).

BGP maintains the ANH/32 route based on the peer's BGP session state:

- As soon as the BGP session (all sessions in the set of sessions) goes down for any reason, the ANH/32 route is removed.

- When the BGP session (at least one session in the set of sessions) comes up, the ANH/32 route is created only after initial route convergence is complete for the peer (e.g. EoR is received).

This ensures that as soon as the (last in the set) eBGP session goes down, the ingress router sees a BGP protocol next-hop unreachability event (from IGP/LDP) for ANH1/32 and can converge to send traffic to the alternate/new-best PNH. It also ensures that as soon as the (first in the set) eBGP session comes up and receives the EoR marker, the ingress router sees a BGP protocol next-hop reachability event (from IGP/LDP) for ANH1/32 and can converge to send traffic to it as the new-best PNH.
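The ANH/32 route lifecycle described above can be sketched as a small state tracker. This is an illustrative model only: the class and method names are hypothetical, the addresses are documentation values, and how a real router ties the route to session state is implementation specific.

```python
class AbstractNextHop:
    """Tracks whether the ANH/32 route should exist for one set of BGP sessions."""

    def __init__(self, address, sessions):
        self.address = address
        # Per-session state: "down", "established" (EoR pending) or "converged" (EoR received).
        self.state = {session: "down" for session in sessions}

    def session_up(self, session):
        self.state[session] = "established"

    def eor_received(self, session):
        # EoR only matters for a session that is currently up.
        if self.state[session] == "established":
            self.state[session] = "converged"

    def session_down(self, session):
        self.state[session] = "down"

    @property
    def route_installed(self):
        # The route exists while at least one session in the set is up and has
        # completed initial convergence; it disappears with the last such session.
        return any(state == "converged" for state in self.state.values())


anh = AbstractNextHop("192.0.2.1", ["PR2.1", "PR2.2"])

anh.session_up("PR2.1")
print(anh.route_installed)   # False: session is up, but EoR not yet received

anh.eor_received("PR2.1")
print(anh.route_installed)   # True: first session in the set has converged

anh.session_up("PR2.2")
anh.eor_received("PR2.2")
anh.session_down("PR2.1")
print(anh.route_installed)   # True: one session of the set remains

anh.session_down("PR2.2")
print(anh.route_installed)   # False: last session in the set went down
```

Waiting for EoR before installing the route keeps ingress routers from sending traffic to an exit point that has not yet learned its routes.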

The Value of ANH IP address

It can be any value that the operator wants to assign, based on IP address management. For example, ANHx can be PeerX's lo0 address, which represents a "single eBGP peer device connected to the local AS". When the same ANH is used to represent a "set of BGP sessions", it also reduces route scale and routing churn in the iBGP network.

Use of Abstract Next-Hop in scale-out peering design

In the traditional configurations described above, the meaning of the BGP PNH is precise. It is:

- [egress interface] for a given prefix in the case of NH-unchanged configuration, or

- [egress ASBR] for a given prefix in the case of NHS configuration.

The meaning of the Abstract Next-Hop is more contextual. This document describes network configurations in which the BGP PNH identifies:

- An [egress ASBR, Peer AS] pair. Therefore, the IP address of this ANH should exist in the IGP if, and only if, the given egress ASBR has at least one eBGP session in ESTABLISHED state with the given Peer AS, and the EoR marker has been received on this session. Let's call it the ASBR-PeerAS Abstract Next-Hop (AP-ANH).

- An [egress Site in local AS, Peer AS] pair, regardless of the number of ASBRs. Therefore, the IP address of this ANH should exist in the IGP if, and only if, at least one ASBR of the given site has at least one eBGP session in ESTABLISHED state with the given Peer AS, and the EoR marker has been received on this session. Let's call it the Site-PeerAS Abstract Next-Hop (SP-ANH).

Please note: reachability of the ANH address in the IGP depends on eBGP session state, not on inter-AS interface state (although in certain deployment scenarios, interface state may impact session state).

How the IP route to the ANH address is instantiated on the ASBR and inserted into IGP/LDP on a particular vendor's device is a matter of local implementation.

EgressASBR-PeerAS abstract NH (AP-ANH)

The AP-ANH has a value unique to the ASBR and its peering AS. For example, in the network in Figure 2, BR1.1 would have two AP-ANHs assigned, one for AS2 and the other for AS3. Similarly, BR1.2 would have two AP-ANHs, one per peer AS, and they are different from the AP-ANHs of BR1.1. And so on. All AP-ANHs are exported into the IGP by the ASBRs.

The ASBR advertises only one path per prefix to its RR, with the BGP PNH set to the appropriate AP-ANH. The RR then propagates it through the entire AS by means of iBGP ADD-PATH. Consequently, the number of paths learned per prefix is equal to the number of ASBRs serving the given peer AS.

In the example network in Figure 2, for AS2 prefixes, this would be N+N' (from site 1 plus from site 2) paths per prefix. This puts the scale requirements of this solution on par with the Next-Hop-Self one.

However, thanks to the properties of the abstract NH, more failures are covered by the prefix-independent technique: removal of the reachability of the BGP PNH (AP-ANH) from the IGP.

Provided that all ASBRs at a given site (site 1 in Figure 2) receive the same routing information from the peer AS (AS2) in non-faulty conditions, one could consider setting the ANH to the same value on all ASBRs and exporting it to IGP/LDP. However, failures can create a situation in which multiple ASBRs have a session in ESTABLISHED state with the given peer AS, but some prefixes are learned via eBGP only on a subset of these ASBRs; routing loops can then form. To prevent this, the per-ASBR AP-ANH needs to be advertised into IGP/LDP, and the ASBRs need to set it as the PNH when advertising routes to the site's Route Reflectors. However, for iBGP path advertisements leaving the site (into the RR mesh), the BGP PNH may be changed to another ANH value, the Site-PeerAS ANH.

The Site-PeerAS Abstract NextHop

The AP-ANH, as defined above, works at the ASBR level. From the perspective of a given local AS, the number of ANHs is proportional to the number of pairs of ASBRs and the ASes each of them peers with.

With hundreds of peer ASes, tens of sites and ~10 ASBRs per site, the number of AP-ANHs may scale up to the thousands. At the same time, path visibility at every BGP speaker in the network with the granularity of the egress ASBR may not be required, and may even be sub-optimal.

With a symmetrical multiplane backbone and/or Leaf-Spine design, it is sufficient for BGP speakers at other sites to know that the given site (site 1 in Figure 2) has at least one ASBR with an ESTABLISHED session to the peer AS (AS2). For example, in the network in Figure 2, even if BR3.1 has only one path with PNH=(ANH of BR1.1), BR3.1 resolves the PNH in the IGP and spreads traffic among all CRs at site 3. Traffic is then delivered to CR1.x at site 1. As long as these CRs (CR1.x) have visibility of all paths (with PNHs representing all ASBRs, BR1.x), traffic is distributed equally in the Leaf-Spine topology to all site 1 ASBRs.

At the same time, when multiple paths are available on BGP speakers, every change is propagated and causes software processing on all BGP speakers across the network (even if the change does not impact any forwarding). For example, in the network in Figure 2, even if BR3.1 has N paths with PNH=(ANH of BR1.1-BR1.N), BR3.1 resolves the PNHs in the IGP and spreads traffic among all CRs at site 3. When one egress ASBR (say BR1.2) loses its connectivity to the peer AS, the respective path is withdrawn from all BGP speakers (e.g. BR3.1) in the network. All BGP speakers perform path selection and possibly update their forwarding data structures, which is unnecessary global churn in the network: the actual forwarding path does not change.

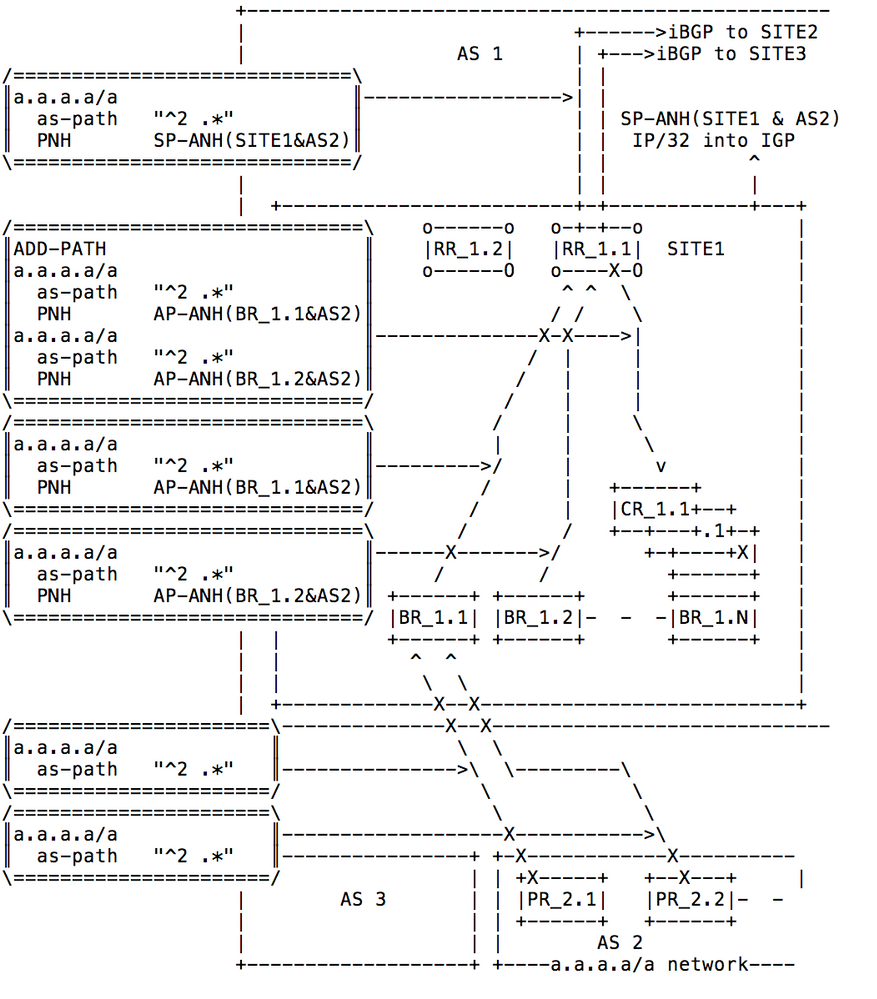

To avoid the above AS-wide challenges, the RRs at a given site (site 1 in Figure 2), when re-advertising BGP paths learned from their ASBR clients, modify the BGP PNH to another abstract value, the Site-PeerAS Abstract NH (SP-ANH). This value is unique per [site, peer AS] pair and shared by both RRs of the given site. With this modification, it is sufficient for inter-site iBGP sessions to carry only one path per prefix (no ADD-PATH needed). Consequently, BGP RIB scale is reduced significantly.
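The next-hop rewrite on the site RR can be sketched as follows. This is a simplified illustration: the function name, the tuple layout and all addresses (taken from documentation ranges) are assumptions, and best-path selection between different peer ASes is left out.

```python
def readvertise_to_other_sites(client_paths, sp_anh_by_peer_as):
    """Collapse per-ASBR paths into one inter-site path per (prefix, peer AS).

    client_paths: iterable of (prefix, peer_as, ap_anh) learned from ASBR clients.
    sp_anh_by_peer_as: this site's SP-ANH address for each peer AS.
    """
    advertised = {}
    for prefix, peer_as, _ap_anh in client_paths:
        # The per-ASBR AP-ANH is replaced by the site-wide SP-ANH, so paths that
        # differed only in their next hop become a single path.
        advertised[(prefix, peer_as)] = sp_anh_by_peer_as[peer_as]
    return advertised


# Hypothetical addressing: four ASBRs at site 1, each with its own AP-ANH for AS2.
ap_anh = {f"BR1.{i}": f"192.0.2.{i}" for i in range(1, 5)}
sp_anh = {"AS2": "198.51.100.1"}
prefixes = ["203.0.113.0/25", "203.0.113.128/25"]

client_paths = [(p, "AS2", nh) for p in prefixes for nh in ap_anh.values()]
inter_site = readvertise_to_other_sites(client_paths, sp_anh)

print(len(client_paths))  # 8 paths inside the site (ADD-PATH)
print(len(inter_site))    # 2 paths towards other sites, one per prefix
```

Inside the site the per-ASBR granularity is kept; towards other sites only the [site, peer AS] identity survives.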

BGP speakers at other sites of AS1 need to resolve the SP-ANH in order to build their local FIB. Therefore the SP-ANH has to be present in the IGP: some router(s) at the local site (RR, ASBR or CR) need to inject it into the IGP. While the selection of the role responsible for SP-ANH injection is discussed below, in any case the SP-ANH should be reachable in the IGP if, and only if, at least one AP-ANH (for the same peer AS and an ASBR belonging to the given site) is reachable.

Figure 3 below illustrates the routing information flow in the network in Figure 2.

Figure 3

Native IP networks

In this type of network, every router (including the CRs) has full BGP routing information and forwards each packet based on destination IP lookup. Provided that all routers at the egress site receive multiple paths with the BGP PNH set to AP-ANH (and not SP-ANH), it is the operator's decision which node (RR, ASBR or CR) injects the SP-ANH route into the IGP.

One may argue that injecting the SP-ANH on the ASBRs is simpler, as it is done by the same mechanism/procedure/policy as the injection of the AP-ANH. Others may prefer injection at the RR, as it limits the number of configuration touch-points.

MPLS

Identical BGP address space and path received on all ASBR's

In an MPLS network, as traffic is carried over LSP tunnels, the SP-ANH needs to be injected into the IGP by a node that has the hardware and software capability to perform IP lookup. This eliminates the RRs, and possibly the CRs (in "BGP/Internet-free core" architectures).

Instead, all ASBRs are used to insert the SP-ANH address into the IGP.

In LDP-based networks, this is sufficient. The CR creates an ECMP forwarding structure for the labels of the SP-ANH FEC coming from other sites.

In RSVP-TE-based networks, ECMP needs to happen on the ingress LSR; therefore, every BGP speaker needs to establish an LSP to every ASBR. If the SP-ANH is used as the RSVP destination, some other means (e.g. affinity groups) needs to be used to ensure the desired 1:1 mapping of LSPs to egress ASBRs.

Different address space sets or paths received on different ASBRs

If the set of prefixes received by one ASBR is different from that received by another (from the same peer AS), the combination of SP-ANH and MPLS-based load balancing on the CRs may lead to a situation where an IP packet lands on the "wrong" ASBR. Similarly, if the path attributes for a given prefix received by one ASBR differ from those received by another, the packet can again land on the "wrong" ASBR.

In this case the ASBR would use an iBGP route it learned from another ASBR at the same site (via the RR, with AP-ANH) and forward traffic over an LSP tunnel to the "correct" one. If such "asymmetry" of prefixes learned from the peer AS is temporary (the result of a fault or error), it could be acceptable (it is up to the provider to decide).

However, if such "asymmetry" is considered normal and permanent, the better solution would probably be to insert the SP-ANH into the IGP on the CR(s). In this case, the CRs need to perform forwarding based on destination IP lookup. Therefore the CR(s) have to learn and handle a large IP FIB: at least all prefixes learned from the peer AS by the local ASBRs.

SPRING

Identical BGP address space and path received on all ASBR's

For SPRING-based networks, the unique capability of the ANYCAST-SID comes into play. The ASBRs of a single site allocate an ANYCAST-SID for the SP-ANH address.

This SID can be used as the only SID by the ingress BGP speaker. Alternatively, if a TE-routed path is desired, then depending on the TE constraints for the LSP, the TE controller can provision a SPRING path with the ANYCAST-SID at the end, instructing the CR to load-balance among the connected ASBRs.

Different address space sets or paths received on different ASBRs

As in the classic MPLS environment, such situations may lead to suboptimal routing (redirection from one ASBR to another), or require the CR (instead of the ASBR) to insert the SP-ANH into the IGP and generate a PREFIX-SID (or an ANYCAST-SID if there is more than one CR) for it.

Failure analysis

For the analysis below, let us assume that each ASBR in a given site of the local AS (site 1 of AS1 in Figure 2) that has an eBGP session with a given peer AS (AS2 in Figure 2) receives from the peer routers (PR2.x) routes to exactly the same address space on each session.

Failure of one out of 2+ eBGP sessions with given peerAS on single ASBR

- The impacted ASBR keeps advertising its AP-ANH IP address into the IGP, as at least one session to the peer AS remains in ESTABLISHED state.

- The impacted ASBR may send UPDATE to the RR; however, the PNH remains the same as before the failure: AP-ANH.

- The RR may send UPDATE to its clients (CRs, BRs) and to RRs at other sites; however, the PNH remains the same as before the failure: AP-ANH and SP-ANH respectively.

- As the PNH does not change, there are no changes in the forwarding data structures (FIB) on any BGP speaker across the network, except possibly the ASBR that holds the impacted session.

Failure of one out of 2+ eBGP sessions with given peerAS on all ASBR of given site

- The impacted ASBRs keep advertising their AP-ANH IP addresses into the IGP, as at least one session to the peer AS remains in ESTABLISHED state on each of them.

- The impacted ASBRs may send UPDATE to the RRs; however, the PNH remains the same as before the failure: AP-ANH.

- The RRs may send UPDATE to their clients (CRs, BRs) and to RRs at other sites; however, the PNH remains the same as before the failure: AP-ANH and SP-ANH respectively.

- As the PNH does not change, there are no changes in the forwarding data structures (FIB) on any BGP speaker across the network, except possibly the ASBRs that hold the impacted sessions.

Failure of all eBGP sessions with given peerAS on single ASBR; failure of single ASBR node

- The impacted ASBR stops advertising its AP-ANH IP address into the IGP, as it has lost all sessions with the given peer AS.

- The SP-ANH is kept reachable in the IGP.

- All other BGP speakers at the impacted site invalidate all paths with PNH equal to the AP-ANH representing the impacted ASBR. This may trigger prefix-independent patching of FIB data structures (a temporary fix) for sub-second traffic restoration.

- The impacted ASBR sends WITHDRAW to the RRs.

- The RRs:

  - Send WITHDRAW to their clients (CRs, BRs) for paths from the impacted ASBR. As these sessions support ADD-PATH, paths from the other ASBRs remain. Other BGP speakers at this site have to modify their FIBs.

  - May send UPDATE to RRs at other sites; however, the PNH remains the same as before the failure: SP-ANH. As the PNH does not change, there are no changes in the forwarding data structures (FIB) on any BGP speaker across the network, except those at the impacted site.

- The routing churn is limited to a single peering site and does not propagate across the network.

Failure of all eBGP sessions with given peerAS on all ASBRs of given site

- All ASBRs at the site stop advertising their AP-ANH IP addresses into the IGP, as they have lost all sessions with the given peer AS.

- The SP-ANH is no longer reachable in the IGP, as none of the AP-ANHs are reachable.

- All other BGP speakers across the network invalidate all paths with PNH equal to the AP-ANH / SP-ANH representing the impacted ASBRs / site. This may trigger prefix-independent patching of FIB data structures (a temporary fix) for sub-second traffic restoration.

- The impacted ASBRs send WITHDRAW to the RRs.

- The RRs send WITHDRAW to their clients (CRs, BRs) and to RRs at other sites for paths from the impacted ASBRs. As these sessions support ADD-PATH, paths from ASBRs at other sites remain. BGP speakers across the network may need to modify their FIBs.
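The four failure cases above can be summarised with a small Python model of ANH reachability. It is a sketch under simplifying assumptions: every session that is up is assumed to have already received EoR, the function names and scope labels are hypothetical, and "scope" refers only to where a next-hop reachability change is seen, not to any BGP UPDATE traffic.

```python
def anh_reachability(site):
    """site maps ASBR name -> list of booleans, one per eBGP session to the peer AS
    (True = session up with EoR received)."""
    # AP-ANH: reachable while the ASBR has at least one such session.
    ap = {asbr: any(sessions) for asbr, sessions in site.items()}
    # SP-ANH: reachable if, and only if, at least one AP-ANH of the site is reachable.
    sp = any(ap.values())
    return ap, sp


def churn_scope(before, after):
    """Where does a PNH reachability change become visible?"""
    ap_before, sp_before = anh_reachability(before)
    ap_after, sp_after = anh_reachability(after)
    if sp_before != sp_after:
        return "network-wide"
    if ap_before != ap_after:
        return "local site"
    return "none"


healthy = {"BR1.1": [True, True], "BR1.2": [True, True]}
scenarios = {
    "one session on one ASBR": {"BR1.1": [False, True], "BR1.2": [True, True]},
    "one session on every ASBR": {"BR1.1": [False, True], "BR1.2": [False, True]},
    "all sessions on one ASBR": {"BR1.1": [False, False], "BR1.2": [True, True]},
    "all sessions on every ASBR": {"BR1.1": [False, False], "BR1.2": [False, False]},
}

for name, after in scenarios.items():
    print(f"{name}: {churn_scope(healthy, after)}")
```

Only the loss of every session at the site removes the SP-ANH and is therefore seen by BGP speakers at other sites.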

JUNOS

The next article will cover how to instantiate Abstract Next-Hops in JUNOS and how to configure the scale-out peering solution described above. Stay tuned.

Acknowledgements

Kaliraj Vairavakkalai and Natrajan Venkataraman contributed heavily to the work leading to this document. Thank you.